The AI Documentation Paradox

More words. Less thinking. And nobody is noticing.

Something strange is happening in product organizations, and it’s happening fast.

In the span of a few months, PRDs got longer, strategy sections got richer, and competitor analysis that used to take a week now appears in an afternoon. Product managers are producing documentation that would have seemed impossibly thorough two years ago.

And yet, when I talk to teams about how decisions actually get made, the process hasn’t changed much.

The documents are better. The thinking behind them isn’t necessarily deeper.

That’s the paradox worth examining.

The Loop Nobody Talks About

Here is the pattern I see emerging across product teams:

A PM uses AI to research and write a detailed PRD: 20 pages covering market sizing, customer segmentation, competitive landscape, strategic options. It looks rigorous. It *is* thorough, in a certain sense.

Then stakeholders receive the document. A few of them drop it into an AI tool to get a summary. The summary becomes the thing people read. The actual document becomes... evidence that work was done.

Written out plainly:

AI writes the document. AI reads the document. Humans read the summary of what AI wrote.

There is nothing technically wrong with any individual step in that chain. But when you zoom out, something has happened to the human thinking layer in the middle.

The question is not whether AI should assist with documentation. Of course it should. The question is: what is documentation actually for in this new environment?

The Consultant Report Effect

This dynamic has a predecessor.

For years, large organizations hired strategy consultants to produce 80-page reports. The reports were thorough. They covered the market, the competition, the strategic options. And they were expensive, sometimes hundreds of thousands of euros for a single engagement.

But often the reports served a function that had little to do with their content. They gave everyone in the organization permission to say: we did the research.

The report was a legitimization artifact. It didn’t generate the thinking; it ratified a decision that had largely already been made, or that needed organizational cover to move forward. The real function was psychological, not analytical.

I worked at BCG for two years. Not all engagements were like this, but many had a conclusion before we even started.

AI has now made this dynamic available to every team, at essentially zero cost.

Where it once took weeks and a six-figure invoice to produce a document that signaled rigor, it now takes an afternoon. The economics changed. The psychology didn’t.

The question isn’t whether you generated the research. It’s whether you understood it, and whether it genuinely changed what you decided.

If the answer is no, you have produced a legitimization artifact. It may still serve a purpose. But calling it “research” or “strategy” is a stretch.

Documentation Inflation Is Real

The underlying mechanism is simple: AI has dramatically lowered the cost of producing words.

When something gets cheaper, you produce more of it. That’s not a problem unique to AI; it’s how every productivity shift works. But in documentation, the signal-to-noise problem compounds quickly.

Think about it in attention terms. A senior stakeholder in a typical product organization reads hundreds of pages of documentation per month. Pre-AI, producing a 20-page PRD required significant effort, which meant it happened sparingly, and readers could reasonably assume that what was written mattered.

Now that 20-page PRD costs the author 1-3 hours. The production cost dropped. The attention cost for the reader didn’t.

The result: documentation inflation. More pages chasing the same finite pool of human attention. And attention, not information, is the actual scarce resource. Remember that you pay attention: you spend a little of it every time you focus on something, and you don’t get it back.

This is why the AI writes → AI summarizes loop exists. It’s a rational response to inflation. Readers are protecting their attention with the same tool that’s eroding it.

In any product organization, the cost of not deciding is often higher than the cost of a wrong decision. Documentation that nobody reads is the organizational equivalent of a decision deferred indefinitely: everyone assumes the matter must be in hand, since there is so much documentation about it.

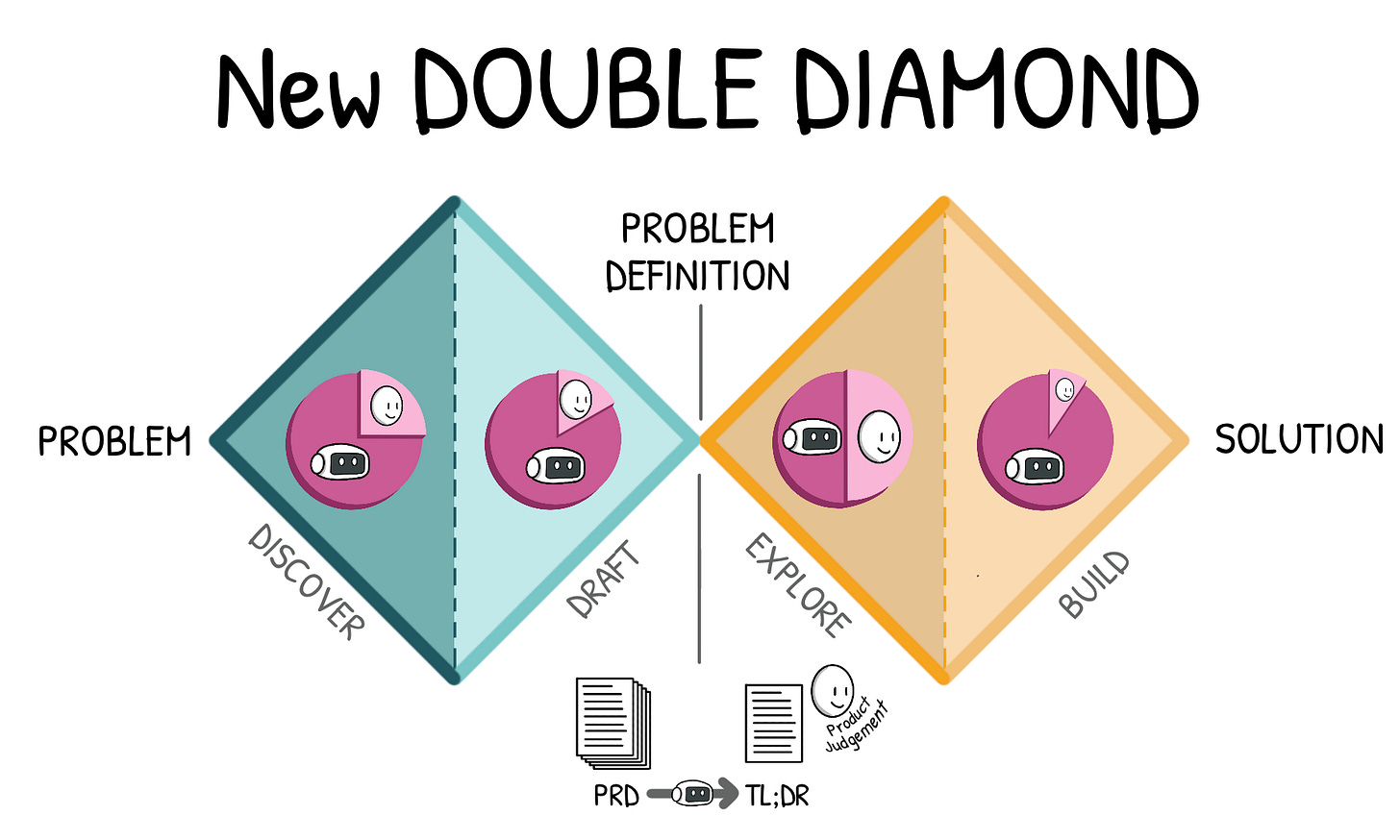

The New Product Double Diamond

Zoom out further and something interesting is taking shape.

The classic double diamond — discover, define, develop, deliver — has been the organizing model for product work for twenty years. AI is reshaping each of its phases simultaneously.

A rough new loop is emerging:

AI Discovers → problem space, market research, customer interview synthesis, competitive mapping

AI Drafts → PRDs, strategy documents, specs, decision memos

AI Compresses → summaries, extracted insights, decision recommendations

AI Explores → prototypes, testing

AI Builds → code, QA

Every stage of product work now has an AI accelerant attached to it. That is genuinely useful. The risk is mistaking acceleration for quality.

A faster double diamond that skips the hard parts — the real customer conversations, the genuine disagreement with stakeholders, the decision that goes against the data — produces worse outcomes at higher velocity. Speed is only valuable if you’re going somewhere meaningful. AI accelerates every step of the process. It does not replace judgment at any of them.

The center of the double diamond becomes more critical than ever.

Product judgement, the ability to understand what is worth pursuing and what should be forgone, is potentially the #1 skill for product leaders going forward.

How to Write in the AI Era

None of this means written culture is in decline. Good documentation still does three irreplaceable things: it clarifies thinking, aligns stakeholders, and preserves decisions over time. Those functions haven’t changed.

What has changed is who, and what, your documents need to work for.

Product teams are no longer writing only for humans. They are also writing for AI systems that will parse, summarize, and extract decisions. This shifts what good documentation looks like: not longer, but better structured.

A few principles worth adopting now:

Lead with the decision, not the narrative

Most documents bury the conclusion somewhere in the middle, after building up the context. Invert it. Start with: Decision: X. Why: Y. Everything else supports that. This serves humans who skim and AI systems that extract.

Separate thinking from evidence

AI mixes these seamlessly, and that’s exactly the problem. A well-structured document distinguishes what we believe from what supports that belief. Claim, then evidence (see Chapter 12 of The Power of Analytics to learn more about the Pyramid Principle).

Compress aggressively

Ironically, the AI era makes shorter documents more valuable, not less. When content can be generated in unlimited quantity, attention becomes the scarce resource, both for the human and for the AI (in the form of token consumption and context windows). A sharp 2-page strategy memo that forces you to choose your words deserves more respect than a 25-page PRD that signals effort.

Structure for extraction

If AI will summarize your documents, and it will, explicit structure becomes essential. Clear headings, numbered arguments, short paragraphs. Documents should behave more like structured reasoning than essays. Not because essays are bad, but because structured reasoning survives compression better.

The underlying discipline is the same as it has always been: thinking clearly enough that the structure almost forces itself.
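To make these principles concrete, here is a minimal sketch in Python of what a decision-first memo could look like as a structure. It is only an illustration: the `DecisionMemo` class, its field names, and the placeholder values are assumptions of mine, not a standard template. The point is the ordering (decision, then why, then numbered claims with their evidence kept separate).

```python
from dataclasses import dataclass, field


@dataclass
class DecisionMemo:
    """Hypothetical decision-first memo: conclusion up front, claims kept apart from evidence."""

    decision: str
    why: str
    # Each claim maps to the evidence items that support it.
    claims: dict[str, list[str]] = field(default_factory=dict)

    def render(self) -> str:
        # Lead with the decision and the reason, before any supporting material.
        lines = [f"Decision: {self.decision}", f"Why: {self.why}", ""]
        # Number the claims so a skimming human or a summarizer can refer to them.
        for number, (claim, evidence) in enumerate(self.claims.items(), start=1):
            lines.append(f"{number}. Claim: {claim}")
            for item in evidence:
                lines.append(f"   - Evidence: {item}")
        return "\n".join(lines)


# Placeholder content only; a real memo would carry your own decision and evidence.
memo = DecisionMemo(
    decision="X",
    why="Y",
    claims={"Placeholder claim": ["Placeholder evidence A", "Placeholder evidence B"]},
)
print(memo.render())
```

Nothing here requires code, of course. The same skeleton works in a plain document; writing it as a structure simply makes the order and the claim/evidence split hard to skip.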

The Real Differentiator

As AI increases the volume of documentation, the genuinely rare skills become more valuable, not less.

Clarity of thought and product judgement.

Not the ability to produce thorough research; that’s now table stakes. The ability to look at that research, understand what it actually means, and articulate a position that a room of smart people might disagree with. That is what AI cannot do on your behalf.

The best product documents in the AI era will not be the longest. They will be the ones that make it immediately obvious: what problem matters, what we decided, and why it was the right call.

AI may be generating more words than ever. The question is whether the human thinking behind those words is getting sharper, or whether it’s quietly being outsourced along with everything else.

What’s your experience with documentation in your team? Are people actually reading PRDs, or has AI-to-AI become the default?
